Philosophy, Computing, and Artificial Intelligence

PHI 319. The Fox and the Crow.

Computational Logic and Human Thinking

Chapter 3 (54-64), Chapter 7 (109-122), Chapter 8 (123-135, 139-140)

The Fox and the Crow

What is it to have a goal?

Consider the "vacuum cleaner agent" from the first lecture and suppose that it functions in terms of the following "observation-thought-decision-action cycle":

1. Perceive current location and whether it is dirty or clean

2. If the location is dirty, suck

3. If the location is A, move to B

4. If the location is B, move to A

5. Repeat (go to 1)
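The five steps above can be sketched in Python as a simple reflex agent. This is a minimal sketch under stated assumptions: the world is a hypothetical dict mapping the two locations "A" and "B" to "dirty" or "clean", and, as in Russell and Norvig's reflex vacuum agent, only the first matching rule fires on each pass through the cycle. The function names are illustrative, not from the lecture.

```python
def perceive(world, location):
    """Step 1: observe the current location and whether it is dirty or clean."""
    return location, world[location]


def decide(location, status):
    """Steps 2-4: condition-action rules, checked in order; the first match fires."""
    if status == "dirty":
        return "suck"
    if location == "A":
        return "move to B"
    if location == "B":
        return "move to A"
    return "no-op"


def run_vacuum(world, location, steps):
    """Step 5: repeat the cycle a fixed number of times; return the actions taken."""
    actions = []
    for _ in range(steps):
        loc, status = perceive(world, location)
        action = decide(loc, status)
        actions.append(action)
        if action == "suck":
            world[loc] = "clean"          # sucking cleans the current square
        elif action == "move to B":
            location = "B"
        elif action == "move to A":
            location = "A"
    return actions


print(run_vacuum({"A": "dirty", "B": "dirty"}, "A", 4))
# ['suck', 'move to B', 'suck', 'move to A']
```

Nothing in the sketch represents a goal explicitly: the agent only maps what it perceives to what it does.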

Does the vacuum have a goal? If so, what makes this true?

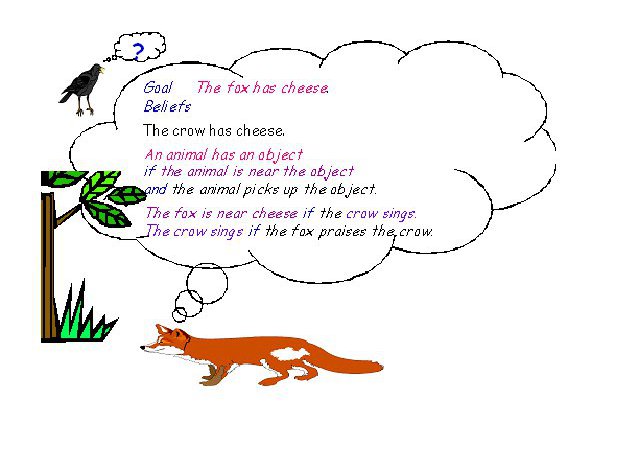

The example of the "fox and the crow" is primitive, but it shows some of the promise of the logic programming/agent model for understanding the intelligence of a rational agent.

The fox has "beliefs" and a "goal."

The goal is new. In the logic programming/agent model as we have seen it thus far, the only reasoning the agent does is backward chaining. This is not enough to model the intelligence of a rational agent.

It is essential to rational agents that they have goals.

So, in this lecture, we are going to supplement the logic programming/agent model so that it contains goals of two types: what we will call maintenance and achievement goals.

The Fox's Beliefs:

x has y if x is near y and x picks up y.

I am near the cheese if the crow has the cheese and the crow sings.

The crow sings if I praise the crow.

The crow has the cheese.

The Fox's Achievement Goal:

I have the cheese.

This achievement goal is introduced in terms of a maintenance goal.

To see how this works, we will begin with the production system model of a rational agent.

The Production System Model

The logic programming/agent model incorporates some parts of the production system model.

"Imagine yourself as the driver of the automated taxi. If the car in front brakes and its brake lights come on, then you should notice this and initiate braking. In other words, some processing is done on the visual input to establish the condition we call 'The car in front is braking.' Then, this triggers some established connection in the agent program to the action 'initiate braking.' We call such a connection a condition–action rule, written as if car-in-front-is-braking then initiate-braking" (Russell & Norvig, Artificial Intelligence. A Modern Approach, 2.4.2.48).

A production system is a collection of condition-action rules embedded in a cycle. Observe the world. Check whether the world (as represented by the beliefs in the KB) matches the conditions in a condition-action rule. If it matches, execute the actions in the rule. Repeat.

The cycle in a production system is an analysis of what it is to be a rational agent.

A rational agent must observe the world. It must acquire beliefs about states of its environment by making observations. A rational agent must have dispositions to like or dislike its situation as represented by its beliefs about the world, and it must have cognitive mechanisms to engage in activity that has a tendency to change its immediate environment so that it acquires new beliefs that interact with its dispositions in such a way that it likes its situation better.
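The production-system cycle described above (observe, match, act, repeat) can be sketched as a loop over condition-action rules. This is a minimal illustration, assuming beliefs are plain strings formed afresh from each observation and that each rule pairs one condition with one action; the names `RULES` and `production_cycle` are hypothetical.

```python
# Each condition-action rule is a (condition, action) pair (hypothetical encoding).
RULES = [
    ("car-in-front-is-braking", "initiate-braking"),
]


def production_cycle(observations, rules):
    """Run one observe-match-act pass per observation; return the actions executed."""
    executed = []
    for percept in observations:            # repeat: one pass per observation
        beliefs = set(percept)              # observe: form beliefs from this percept
        for condition, action in rules:     # match: compare each rule's condition
            if condition in beliefs:
                executed.append(action)     # act: execute the rule's action
    return executed


# Two cycles: nothing notable observed, then the car in front brakes.
print(production_cycle([[], ["car-in-front-is-braking"]], RULES))
# ['initiate-braking']
```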

A Simple Production System Agent

Kowalski's "Adam and Eve" example (Computational Logic and Human Thinking, 117) illustrates part of the idea that underlies the production system model of a rational agent.

The initial facts in the "Adam and Eve" example are:

Eve is the mother of Cain

Eve is the mother of Abel

Adam is the father of Cain

Adam is the father of Abel

Cain is the father of Enoch

Enoch is the father of Irad

We can think of the agent as starting with these facts as beliefs in its KB.

In addition to the beliefs in its KB, the agent has condition-action rules:

If X is the mother of Y, then add X is an ancestor of Y.

If X is the father of Y, then add X is an ancestor of Y.

If X is an ancestor of Y and Y is an ancestor of Z, then add X is an ancestor of Z.
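The three rules can be run by repeating the cycle until no rule adds a new belief. This is a minimal sketch, assuming beliefs are encoded as hypothetical `(relation, x, y)` tuples; adding a belief to the set plays the role of the agent's basic action of adding a belief to its KB.

```python
FACTS = {
    ("mother", "Eve", "Cain"), ("mother", "Eve", "Abel"),
    ("father", "Adam", "Cain"), ("father", "Adam", "Abel"),
    ("father", "Cain", "Enoch"), ("father", "Enoch", "Irad"),
}


def new_beliefs(kb):
    """Return the beliefs the three condition-action rules would add to kb."""
    added = set()
    for relation, x, y in kb:
        if relation in ("mother", "father"):        # rules 1 and 2
            added.add(("ancestor", x, y))
    links = [(x, y) for relation, x, y in kb if relation == "ancestor"]
    for x, y in links:                               # rule 3: chain two ancestor links
        for y2, z in links:
            if y == y2:
                added.add(("ancestor", x, z))
    return added - kb


def forward_chain(kb):
    """Repeat the cycle until no rule adds anything new."""
    kb = set(kb)
    while True:
        added = new_beliefs(kb)
        if not added:
            return kb
        kb |= added                                  # the basic action: add beliefs


ancestors = sorted((x, z) for r, x, z in forward_chain(FACTS) if r == "ancestor")
print(len(ancestors))
# 11
```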

These condition-action rules give the "Adam and Eve" agent goals to achieve.

Consider the first condition-action rule. Given the belief that "Eve is the mother of Cain," this rule gives the agent the goal to add to its beliefs that "Eve is an ancestor of Cain."

We can imagine that adding beliefs to its KB is a basic action for the "Adam and Eve" agent. Once it has a goal to add a belief, it immediately carries out the action that achieves the goal.

The "Adam and Eve" agent is not a very exciting achievement in AI. Its goal in life is to make its KB contain beliefs about ancestors, given initial beliefs about the mothers and fathers of Cain, Abel, Enoch, and Irad. Still, it shows how to begin to think about achieving goals.

The Goal Stack in Production Systems

For agents with more complex goals, the production system model uses a goal stack.

To see how the goal stack functions in what an agent does to achieve a goal, here are some of the condition-action rules for the "Fox and the Crow" example as a production system:

If the goal (at the top of the stack) is to have an object, and you are not near the object, then add (by pushing on to the stack) the goal to be near the object.

If the goal (at the top of the stack) is to be near an object, and you are near the object, then delete the goal (by popping it off the stack).

If the goal (at the top of the stack) is to have an object, and you are near the object, then pick up the object and delete the goal (by popping it off the stack).
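The three goal-stack rules can be sketched as a loop that inspects the top of the stack on each cycle. This is a sketch under assumptions: the stack is a Python list whose last element is the top, and a fourth rule ("if the goal is to be near an object and you are not near it, move to the object") is a hypothetical addition, not one of the lecture's rules, included only so the loop can finish when the fox starts away from the cheese.

```python
def fox_goal_stack(obj, near):
    """Run the goal-stack rules for the goal 'have obj'; return the actions taken."""
    stack = [("have", obj)]                          # the top of the stack is stack[-1]
    actions = []
    while stack:
        kind, target = stack[-1]
        if kind == "have" and not near:
            stack.append(("near", target))           # rule 1: push the subgoal
        elif kind == "near" and near:
            stack.pop()                              # rule 2: subgoal met, pop it
        elif kind == "have" and near:
            actions.append(f"pick up the {target}")  # rule 3: act, then pop
            stack.pop()
        else:  # kind == "near" and not near
            actions.append(f"move to the {target}")  # rule 4 (hypothetical)
            near = True
    return actions


print(fox_goal_stack("cheese", near=False))
# ['move to the cheese', 'pick up the cheese']
```

When the fox already starts near the cheese, only the third rule fires and the only action is picking up the cheese.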

Achievement Goals and Maintenance Goals

The logic programming/agent model we are building tries to incorporate the primary insight of these production systems: that beliefs trigger goals in rational agents.

In the model we are building, the conditionals are expressed in the language of logic. In addition, to introduce achievement goals, the model incorporates maintenance goals.

"[W]hen you think every man should walk and you yourself are a man, you immediately walk.... Sometimes one does not stop and consider one of the two premises, namely, the obvious one; for example, if walking is good for a man, one does not waste time over the premise I am myself a man. Hence such things as we do without calculation, we do quickly. When a man acts for the object he has in view from perception or imagination or thought, he immediately does what he desires.... For example, the appetite says [drink]; this is drink, says sensation or imagination or thought, and one immediately drinks. It is in this manner that animals are impelled to move and act" (Aristotle (384-322 BCE), On the Movement of Animals VII.70a1).

Maintenance goals are goals the agent achieves to maintain a relationship with the changing state of the world. In the model, a maintenance goal takes the following form

if condition, then achievement goal

When the agent has a belief whose propositional content matches the condition in the antecedent of the maintenance goal, the achievement goal in the consequent becomes a goal the agent works to achieve in order to maintain its relationship with the world.

The antecedents of maintenance goals describe states of the world that matter to the agent because they bear on its ability to maintain itself. Consider hunger in animals. When animals are hungry, they tend to move to find food and eat it. In terms of the model, the conditional

"if I am hungry, I find food and eat it"

functions as a maintenance goal. When the animal registers the truth of the antecedent (has the belief "I am hungry" in its KB), the content of the consequent is activated as an achievement goal. The achievement goal moves the animal to take steps to find food and eat it.
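Triggering can be sketched as matching each maintenance goal's condition against the beliefs in the KB. This is a minimal sketch, assuming beliefs and conditions are plain strings that match only when identical; the second maintenance goal (about thirst) is a hypothetical example, not from the lecture.

```python
# Maintenance goals as (condition, achievement goal) pairs.
MAINTENANCE_GOALS = [
    ("I am hungry", "I find food and eat it"),
    ("I am thirsty", "I find water and drink it"),   # hypothetical example
]


def triggered_goals(beliefs, maintenance_goals):
    """Return the achievement goals whose conditions match a current belief."""
    return [goal for condition, goal in maintenance_goals if condition in beliefs]


print(triggered_goals({"I am hungry"}, MAINTENANCE_GOALS))
# ['I find food and eat it']
```

A belief that matches no condition activates nothing, which is why the agent need only watch for the conditions its maintenance goals mention.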

To understand what a maintenance goal is, it is helpful to think about desire in terms of the (ancient Platonic) model of depletion and replenishment. The object of the desire replenishes and thus maintains the agent. (In the fox and the crow, finding and eating food replenishes the fox.) The desire (for food in the fox and crow example) arises because the agent is depleted in a certain way (the fox is hungry). The maintenance goal links the depletion (hunger) to the condition (eating) that replenishes what was depleted.

The agent does not try to form beliefs about everything in the world. This would be an endless task. Instead, the agent concentrates on whether the conditions have been met to trigger a maintenance goal and thus to introduce a goal it needs to achieve to maintain itself.

In the logic programming/agent model, then, as we are building it, there are maintenance goals, achievement goals, and beliefs. Beliefs trigger maintenance goals. When maintenance goals are triggered, they issue in achievement goals the agent tries to achieve.

The Fox's Achievement Goal

Against this background, we can return to the example of the fox and the crow to see in more detail what we must do to incorporate an observation-thought-decision-action cycle.

The fox's goal "I have the cheese" is a goal of achieving (bringing about or causing) some future state of the world in order to maintain a certain existence, namely, that it not be hungry.

The fox gets its initial achievement goal because it has the following maintenance goal:

If I am hungry, then I have food and I eat the food.

We can imagine too that the fox has the following beliefs:

I have X if I am near X and I pick up X.

I am near the cheese if the crow has the cheese and the crow sings.

The crow sings if I praise the crow.

Cheese is food.

(Note that the "fox and crow" example is now slightly different. Given the maintenance goal, the initial achievement goal is no longer "I have cheese." It is "I have food and I eat the food.")

In the first step of the observation-thought-decision-action cycle in the logic programming/agent model, the fox gets beliefs about itself and its environment. (We assume that observations are a way of forming beliefs and that agents can observe positive features of the world.) It has the observation

I am hungry

Given the maintenance goal, this belief triggers the achievement goal

I have food and I eat the food

Given the belief in its KB that "Cheese is food," this goal becomes

I have cheese and I eat the cheese
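The step from "I have food and I eat the food" to "I have cheese and I eat the cheese" can be read as binding a variable. This is a minimal sketch, assuming the goal is encoded (hypothetically) as the conjunction "X is food and I have X and I eat X", with beliefs as `(subject, predicate, object)` triples; solving the first conjunct against "Cheese is food" binds X to cheese.

```python
# Goal: X is food and I have X and I eat X ("X" is a variable).
GOAL = [("X", "is", "food"), ("I", "have", "X"), ("I", "eat", "X")]
BELIEFS = {("cheese", "is", "food")}


def instantiate(goal, beliefs):
    """Solve the first conjunct ("X is food") against the beliefs.

    Returns one instantiated copy of the remaining conjuncts per binding of X.
    Assumes the first conjunct's subject is the variable X.
    """
    first, rest = goal[0], goal[1:]
    results = []
    for subject, predicate, obj in sorted(beliefs):
        if (predicate, obj) == first[1:]:           # the belief matches "X is food"
            results.append([tuple(subject if term == "X" else term for term in triple)
                            for triple in rest])    # substitute X := subject
    return results


print(instantiate(GOAL, BELIEFS))
# [[('I', 'have', 'cheese'), ('I', 'eat', 'cheese')]]
```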

Processing the Achievement Goal

Now that the fox has this achievement goal, what does it do with it?

The fox should engage in the thinking to achieve this goal.

What, exactly, is this thinking?

"Starting from the original, top-level goal [I have the cheese] and following links in the graph, the fox can readily find a sub-graph that connects the goal either to known facts, such as the crow has the cheese, or to action subgoals, such as I praise the crow and I pick up the object, that can be turned into facts by executing them successfully in the real world. This subgraph is a proof that, if the actions in the plan succeed, and if the fox’s beliefs are actually true, then the fox will achieve her top-level goal. The fox’s strategy for searching the graph, putting the connections together and constructing the proof is called a proof procedure" (Robert Kowalski, Computational Logic and Human Thinking, 58).

It is not passing the achievement goal as a query to the KB.

Nor should we expect it to be.

Backward chaining is a procedure for determining whether queries are logical consequences of the KB, but we do not expect achievement goals to be such consequences. Most of the time, to achieve our achievement goals, we need to do something to change the world.

To see this, suppose that the fox does pass its achievement goal as a query to its KB.

The Achievement Goal as a Query to the KB

The fox can unify "I have cheese" in the achievement goal with the head of the first rule in the KB. Given the tail of the rule, this step in the fox's thinking introduces the following subgoals

I am near the cheese and I pick up the cheese

These subgoals are pushed onto the query list (really a goal stack). Now the list is

I am near the cheese and I pick up the cheese and I eat the cheese

The fox can unify "I am near the cheese" (the first entry in the query list) with the head of the second rule in the KB. This introduces new subgoals. Now the fox's goal list becomes

The crow has cheese and

the crow sings and

I pick up the cheese and

I eat the cheese

Because the fox cannot unify "The crow has cheese" with the head of any of its beliefs, the query fails. This means that the achievement goal is not a logical consequence of the KB.

If, however, the fox observes that "The crow has cheese," the fox can unify the subgoal "The crow has cheese" with the belief it got from observation. This reduces the goal list to

The crow sings and

I pick up the cheese and

I eat the cheese

The fox can unify "The crow sings" with the head of the third rule in the KB. This transforms the goal list to three propositions that must be true for the fox to satisfy its achievement goal:

I praise the crow and

I pick up the cheese and

I eat the cheese

At this point, once again, the query fails because no further unifications are possible.
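The reduction traced above can be sketched as a small propositional backward chainer that returns the goals it could not reduce. This is a minimal sketch under assumptions: the rule "I have X if I am near X and I pick up X" is instantiated in advance with X = the cheese, each head has a single rule (so no backtracking is needed), goals are plain strings, and `reduce_goals` is a hypothetical name.

```python
# Rules: head -> body (list of subgoals). The first rule is pre-instantiated
# with "the cheese" for X (an assumption of this sketch).
RULES = {
    "I have the cheese": ["I am near the cheese", "I pick up the cheese"],
    "I am near the cheese": ["the crow has the cheese", "the crow sings"],
    "the crow sings": ["I praise the crow"],
}


def reduce_goals(goals, rules, facts):
    """Reduce the goal list left to right.

    Returns [] if every goal is resolved (the query succeeds); otherwise
    returns the goal list at the point where no fact or rule head matches
    the first goal (the query fails).
    """
    goals = list(goals)
    while goals:
        first, rest = goals[0], goals[1:]
        if first in facts:
            goals = rest                      # unify with a fact: drop the goal
        elif first in rules:
            goals = rules[first] + rest       # unify with a rule head: push its body
        else:
            return goals                      # no unification possible: fail here
    return goals


query = ["I have the cheese", "I eat the cheese"]
print(reduce_goals(query, RULES, facts=set()))
print(reduce_goals(query, RULES, facts={"the crow has the cheese"}))
```

The first call stops at "the crow has the cheese", as in the first failure described above. The second call, after the observation, stops with "I praise the crow", "I pick up the cheese", and "I eat the cheese": goals that no belief supports and that backward chaining alone cannot turn into facts.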

The Logic Programming/Agent Model is Incomplete

This shows that we have more work to do.

The observation-thought-decision-action cycle starts with observation. In the fox and the crow, it starts with the fox forming the belief "I am hungry" on the basis of observation.

This moves us in the right direction. We can easily imagine that the "fox" machine has various sensors and we have figured out how to translate the information from the sensors into beliefs.

What happens next?

The belief "I am hungry" triggers the maintenance goal.

What, exactly, does this mean?

Here is one possibility.

Since the mind of the fox is equipped with backward chaining, a procedure runs to check whether the condition ("I am hungry") in the maintenance goal ("If I am hungry, I have food and eat the food") is a logical consequence of the KB. If it is, then because of how the fox's mind works, the fox has the achievement goal ("I have food and eat food").

What happens next?

We do not have a clearly understandable answer to this question, and this is a problem.

We can pass the achievement goal as a query to the KB. This would tell us if the propositional content of the goal is a logical consequence of the beliefs in the KB. This information might be useful, but it does not show us the thinking in which the fox engages to achieve the achievement goal. We generally have to change the world to achieve our goals.

So, at this point in our investigation, although we have a promising model for how rational agents get achievement goals in terms of maintenance goals, our model is incomplete. It does not incorporate the thinking involved in achieving an achievement goal.

What we have Accomplished in this Lecture

We tried to incorporate the observation-thought-decision-action cycle into the logic programming/agent model. This is a step in the right direction, but the model is still clearly much too crude to be a realistic model of the intelligence of a rational agent.

The model does, though, as we have seen, begin to show how one might use logic to implement the intelligence of a rational agent on a machine. Rational agents have beliefs about the world and think in terms of these beliefs to decide what to do. In terms of the logic programming/agent model, a knowledge base is a symbolic structure that represents the agent's beliefs. When beliefs in the KB show the agent the world is a certain way in which the agent has an interest, the beliefs trigger a maintenance goal. The maintenance goal issues in an achievement goal. Now we need the thinking to achieve this achievement goal.