Philosophy, Computing, and Artificial Intelligence

PHI 319. An Extension to the Logic Programming/Agent Model.

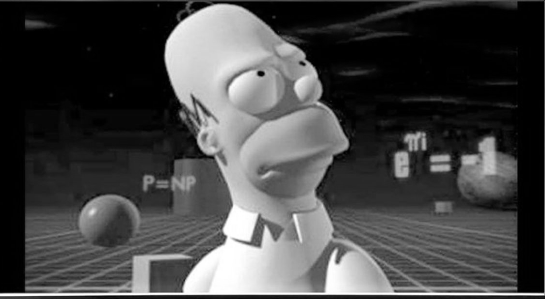

Season 7, episode 6: "Treehouse of Horror VI: Homer3"

Original air date: October 29, 1995

Whether P = NP is a problem in complexity theory.

`e^{i\pi}+1 = 0` is Euler's Identity. It is true.

Computational Logic and Human Thinking

Chapter 10 (150-159), Appendix A6 (301-317)

Abduction and Abductive Logic Programming

Abduction is defeasible reasoning from effects to causes.

In the case of symptoms and diseases, abduction is reasoning from the symptom (and a background theory) to the disease that explains the observation of the symptom.

Abduction is common in rational agents. Why? As we saw in the fire example when we were thinking about how one rational agent can be more intelligent than another, explanations of observations may trigger maintenance goals that the observations themselves do not trigger.

The Grass is Wet

Suppose that the agent makes the observation that the grass is wet. There are many possible explanations, but in this part of the world (Tempe, Arizona) the most likely alternatives are that it rained (which does not happen much) or that the sprinkler was on.

How does the agent reason to these explanations?

"[T]reating observations as goals extends the notion of goal, beyond representing the world as the agent would like it to be in the future, to explaining the world as the agent actually sees it. This is because the two kinds of reasoning, finding actions to achieve a goal and finding hypotheses to explain an observation, can both be viewed as special cases of the more abstract problem of finding assumptions to deductively derive conclusions" (Robert Kowalski, Computational Logic and Human Thinking, 152). One way to reason to these explanations is by reasoning backwards from the observation (treated as a query relative to the KB) with beliefs about causal connections in the form

effect if cause

effect if cause

...

effect if cause

Suppose that the beliefs about the causal connections are

the grass is wet if it rained.

the grass is wet if the sprinkler was on.

In this KB, there are "open" and "closed" predicates.

The predicate wet is "closed." In the KB, there is a set of conditions that partially define this predicate. According to the KB, a thing is wet if it rained or the sprinkler was on.

In the KB, the predicates rained and the sprinkler was on are "open." They have no set of conditions defining them because they are not in the heads of any clauses in the KB.

"Hypotheses in the form of facts... represent possible

underlying causes of observations; and the process of generating them is

known as abduction" (Robert Kowalski, Computational Logic and Human Thinking, 151).

"In abduction, we augment our beliefs with assumptions concerning instances of open predicates"

(Robert Kowalski, Computational Logic and Human Thinking, 159).

Open predicates provide the possible hypotheses for abduction.
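The way open predicates supply hypotheses can be sketched in a few lines of JavaScript. This is only an illustration under stated assumptions, not Kowalski's own procedure: the clause encoding (`head`, `body`) and the names `grassKb`, `isOpen`, and `explain` are hypothetical, and the KB is assumed to contain no circular definitions.

```javascript
// Hypothetical encoding: each clause reads "head if body[0] and body[1] ...".
const grassKb = [
  { head: "the grass is wet", body: ["it rained"] },
  { head: "the grass is wet", body: ["the sprinkler was on"] },
];

// A predicate is "open" if it is not in the head of any clause in the KB.
function isOpen(atom, clauses) {
  return !clauses.some((clause) => clause.head === atom);
}

// Reason backward from a goal. Each result is one set of open atoms
// (assumptions) that, added to the KB, allows the goal to be derived.
// Assumes the KB has no circular definitions.
function explain(goal, clauses) {
  if (isOpen(goal, clauses)) {
    return [[goal]];
  }
  const explanations = [];
  for (const clause of clauses.filter((c) => c.head === goal)) {
    // Combine the explanations of every condition in the clause body.
    let partial = [[]];
    for (const condition of clause.body) {
      const next = [];
      for (const soFar of partial) {
        for (const sub of explain(condition, clauses)) {
          next.push([...new Set([...soFar, ...sub])]);
        }
      }
      partial = next;
    }
    explanations.push(...partial);
  }
  return explanations;
}

// The observation is treated as a query relative to the KB.
const hypotheses = explain("the grass is wet", grassKb);
console.log(hypotheses);
```

For the observation the grass is wet, the sketch returns two candidate explanations, one containing it rained and one containing the sprinkler was on.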

Finding the Explanation

Backward reasoning from the observation that the grass is wet results in two possible explanations: the grass is wet because either it rained or the sprinkler was on. The problem is to decide which explanation is best (most reasonable to believe).

In general, this decision is difficult to make.

One thing we can do is reason forward. Forward reasoning can sometimes derive additional consequences that can be confirmed by past or future observations. The greater the number of additional observations a hypothesis explains, the better the explanation seems.

Suppose the agent observes that the skylight is wet and reasons forward from it rained to the skylight is wet. Now the hypothesis it rained explains two independent observations, whereas the sprinkler was on explains only one. So it rained seems to be the better explanation.
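The comparison can be sketched as forward reasoning followed by a count. This is a minimal illustration, assuming the agent also holds the belief the skylight is wet if it rained (the text implies this belief but does not state it). The names `causalBeliefs`, `consequences`, and `explainedCount` are hypothetical.

```javascript
// Hypothetical encoding: each clause reads "head if body[0] and body[1] ...".
// The third clause is an assumption added for this illustration.
const causalBeliefs = [
  { head: "the grass is wet", body: ["it rained"] },
  { head: "the grass is wet", body: ["the sprinkler was on"] },
  { head: "the skylight is wet", body: ["it rained"] },
];
const observations = ["the grass is wet", "the skylight is wet"];

// Reason forward from a hypothesis until no new consequences appear.
function consequences(hypothesis, clauses) {
  const derived = new Set([hypothesis]);
  let changed = true;
  while (changed) {
    changed = false;
    for (const { head, body } of clauses) {
      if (!derived.has(head) && body.every((atom) => derived.has(atom))) {
        derived.add(head);
        changed = true;
      }
    }
  }
  return derived;
}

// Count how many of the observations follow from the hypothesis.
function explainedCount(hypothesis) {
  const derived = consequences(hypothesis, causalBeliefs);
  return observations.filter((o) => derived.has(o)).length;
}

const scores = {
  "it rained": explainedCount("it rained"),
  "the sprinkler was on": explainedCount("the sprinkler was on"),
};
console.log(scores);
```

Here it rained accounts for both observations and the sprinkler was on accounts for one. Counting is only a rough heuristic for which explanation is better, not a full account of it.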

Integrity Constraints

Another way to decide between possible explanations is in terms of the consistency of the explanation with observations. In the "grass is wet" example, there are two possible explanations of the grass is wet. It might be that it rained or that the sprinkler was on. Suppose, however, in thinking about which explanation is better, that the agent observes that on the clothesline outside the clothes are dry. The hypothesis that it rained is inconsistent with this observation. This inconsistency eliminates it rained as an explanation of the grass is wet.

One way to incorporate the consistency requirement is with an integrity constraint.

Integrity constraints function like prohibitions. In the "runaway trolley" example (in the last lecture), the agent reasons forward from the items in the plan to consequences to determine if any of these consequences trigger a prohibition. If they do, the agent abandons the plan because the prohibition makes it impossible both to accept the plan and to do something right.

In the "grass is wet" example, the agent reasons forwards from possible explanations to consequences of those explanations. If any of these consequences are inconsistent with what the agent knows, the agent abandons the explanation because the integrity constraint makes it impossible both to accept the explanation and to maintain the integrity of its knowledge base.

Let the integrity constraint be

if a thing is dry and the thing is wet, then false.

Now suppose the beliefs are

the clothes outside are dry.

the clothes outside are wet if it rained.

Suppose the hypothesis is

it rained.

Forward reasoning yields

the clothes outside are wet

Forward reasoning with this consequence and the constraint yields

if the clothes outside are dry, then false

More forward reasoning yields

false

This eliminates the hypothesis that it rained as an explanation for why the grass is wet.
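The derivation above can be sketched as a consistency check. This is an illustration under stated assumptions: the general constraint (if a thing is dry and the thing is wet, then false) is encoded here only for the clothes outside, as a list of atoms that cannot all hold together. The names `clothesBeliefs`, `integrityConstraints`, `forwardClosure`, and `isConsistent` are hypothetical.

```javascript
// Hypothetical encoding: a clause with an empty body is a fact.
const clothesBeliefs = [
  { head: "the clothes outside are dry", body: [] },
  { head: "the clothes outside are wet", body: ["it rained"] },
];

// Each constraint lists atoms that cannot all be true together.
// This instantiates "if a thing is dry and the thing is wet, then false".
const integrityConstraints = [
  ["the clothes outside are dry", "the clothes outside are wet"],
];

// Reason forward from the assumptions until nothing new can be derived.
function forwardClosure(assumptions, clauses) {
  const derived = new Set(assumptions);
  let changed = true;
  while (changed) {
    changed = false;
    for (const { head, body } of clauses) {
      if (!derived.has(head) && body.every((atom) => derived.has(atom))) {
        derived.add(head);
        changed = true;
      }
    }
  }
  return derived;
}

// A hypothesis is rejected if its consequences trigger a constraint.
function isConsistent(hypothesis) {
  const derived = forwardClosure([hypothesis], clothesBeliefs);
  return !integrityConstraints.some((constraint) =>
    constraint.every((atom) => derived.has(atom))
  );
}

console.log(isConsistent("it rained"));
console.log(isConsistent("the sprinkler was on"));
```

In this sketch the hypothesis it rained fails the check, because forward reasoning derives both the clothes outside are dry and the clothes outside are wet. The hypothesis the sprinkler was on passes.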

On the Difficulty of Certain Problems

"If P = NP, then the ability to check the solutions to puzzles efficiently would imply the ability to

find solutions efficiently. An analogy would be if anyone able to appreciate a great symphony

could also compose one themselves!" (Scott Aaronson,

"Why Philosophers Should Care About Computational Complexity").

P is the set of yes/no problems that can be solved in polynomial time.

NP is the set of yes/no problems such that if the answer is "yes," the proof can be checked in polynomial time.

If a problem is in P, then it is also in NP.

co-NP is the set of yes/no problems such that if the answer is "no," the proof can be checked in polynomial time.

If a problem is in P, then it is also in co-NP.

It is generally thought that P ≠ NP, but no one has proven that this is true. This is one of the Millennium Problems.

(Similarly, although it is generally thought that NP ≠ co-NP, no one has proven that this is true.)

For an example of P vs NP, consider the problem of finding an interpretation that makes a propositional formula true. (If such an interpretation exists, the formula is satisfiable. If no such interpretation exists, the formula is unsatisfiable. If the formula contains n atomic sentences, there are 2^n interpretations.)

This is the SAT problem. No one knows how to solve it in less than exponential time in the worst case. The obvious method, trying all the possible interpretations, takes exponential time. But if an interpretation is supplied, it is straightforward to verify in polynomial time that this supplied interpretation makes the formula true.
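The gap between finding and checking can be sketched for a small formula. This is an illustration only: it assumes the formula is given in conjunctive normal form, and the example formula and the names `formula`, `atoms`, `satisfies`, and `bruteForceSat` are hypothetical.

```javascript
// Hypothetical formula in conjunctive normal form:
// (p or q) and (not p or r) and (not q or not r)
const formula = [
  [{ atom: "p", negated: false }, { atom: "q", negated: false }],
  [{ atom: "p", negated: true }, { atom: "r", negated: false }],
  [{ atom: "q", negated: true }, { atom: "r", negated: true }],
];
const atoms = ["p", "q", "r"];

// Verifying: one pass over the formula, so polynomial in its size.
// A literal is true when the atom's value differs from its "negated" flag.
function satisfies(interpretation, cnf) {
  return cnf.every((clause) =>
    clause.some((literal) => interpretation[literal.atom] !== literal.negated)
  );
}

// Solving by exhaustive search: up to 2^n interpretations for n atoms.
function bruteForceSat(cnf, names) {
  const total = 2 ** names.length;
  for (let bits = 0; bits < total; bits++) {
    const interpretation = {};
    names.forEach((name, i) => {
      interpretation[name] = Boolean((bits >> i) & 1);
    });
    if (satisfies(interpretation, cnf)) {
      return interpretation;
    }
  }
  return null; // no interpretation makes the formula true
}

const model = bruteForceSat(formula, atoms);
console.log(model);
```

The verifier `satisfies` looks at each literal once, whereas `bruteForceSat` may have to call it for every one of the 2^n interpretations before it can report that a formula is unsatisfiable.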

Time complexity is the estimated number of steps in an algorithm as a function of the size of its input. Consider an algorithm to find the maximum value of an array:

1. function findMax(arr) {
2.   let max;
3.   for (let i = 0; i < arr.length; i++) {
4.     if (max === undefined || max < arr[i]) {
5.       max = arr[i];
6.     }
7.   }
8.   return max;
9. }

How many steps are in this algorithm?

There is one step (line 2), and there is a for loop (line 3) that runs once for each of the n elements of the input array and contains at most 2 steps (lines 4 and 5). So the number of steps is at most 2n + 1.

This algorithm, then, executes in linear time (in proportion to the input size).

In Big O notation, the time complexity for the algorithm is written as O(n).

The running time increases at most linearly with the size of the input: it is bounded above by a linear function of n.
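The step count can be checked with an instrumented version of the algorithm. This is a sketch that adopts the counting convention used above (the declaration, each comparison, and each assignment count as one step, and loop bookkeeping is ignored). The name `findMaxCountingSteps` and the `steps` counter are hypothetical additions.

```javascript
// Same algorithm as findMax, with a counter for the steps counted above.
function findMaxCountingSteps(values) {
  let steps = 0;
  let max;
  steps += 1; // the declaration of max
  for (let i = 0; i < values.length; i++) {
    steps += 1; // the comparison
    if (max === undefined || max < values[i]) {
      max = values[i];
      steps += 1; // the assignment
    }
  }
  return { max, steps };
}

// An ascending array is the worst case: every element triggers an assignment.
const worstCase = findMaxCountingSteps([1, 2, 3, 4, 5]);
console.log(worstCase.max, worstCase.steps);

// Other orderings take fewer steps, but never more than 2n + 1.
const typicalCase = findMaxCountingSteps([3, 1, 4, 1, 5]);
console.log(typicalCase.max, typicalCase.steps);
```

For an array of n = 5 elements in ascending order the count reaches the bound 2n + 1 = 11. For other orderings it is lower, which is why Big O notation records only the linear upper bound.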

For some problems, it is more difficult to solve them than to verify their solutions. Computational complexity theory classifies computational problems according to how difficult they are to solve. This theory is beyond the scope of this class, but the basic idea is straightforward to see for some instances of forward and backward reasoning.

"In solving a problem ... sort, the grand thing is to be able to

reason backward. That is a very useful accomplishment, and a very easy one, but people do not

practise it much. In the everyday affairs of life it is more useful to reason forward,

and so the other comes to be neglected. There are fifty who can reason synthetically

for one who can reason analytically."

"I confess, Holmes, that I do not quite follow you."

"I hardly expected that you would, Watson. Let me see if I can make it clearer.

Most people, if you describe a train of events to them, will tell you what the result would be.

They can put those events together in their minds, and argue from them that something will come to pass.

There are few people, however, who, if you told them a result, would be able to evolve from

their own inner consciousness what the steps were which led up to that result. This power is what

I mean when I talk of reasoning backwards, or analytically"

(Arthur Conan Doyle,

A Study in Scarlet, 58).

Here is a more detailed explanation of reasoning "synthetically" and "analytically."

"A primitive man wishes to cross a creek; but he cannot do so in the usual way because the water has risen

overnight. Thus, the crossing becomes the object of a problem; “crossing the creek’ is the x of this

primitive problem. The man may recall that he has crossed some other creek by walking along a fallen tree.

He looks around for a suitable fallen tree which becomes his new unknown, his y. He cannot find any suitable

tree but there are plenty of trees standing along he creek; he wishes that one of them would fall. Could he

make a tree fall across the creek? There is a great idea and there is a new unknown; by what means could he

tilt the tree over the creek?

This train of ideas ought to be called analysis if we accept the

terminology of Pappus [of Alexandria, Greek geometer, 4th century CE].

If the primitive man succeeds in finishing his analysis he may become the inventor of the bridge

and of the axe.

What will be the synthesis? Translation of ideas into actions. The finishing act of

the synthesis is walking along a tree across the creek.

The same objects fill the analysis and the synthesis; they exercise the mind of the man in the analysis and

his muscles in the synthesis; the analysis consists in thoughts, the synthesis in acts. There is another

difference; the order is reversed. Walking across the creek is the first desire from which the analysis

starts and it is the last act with which the synthesis ends"

(George Pólya (mathematician, 1887-1985),

How to Solve it).

What we have Accomplished in this Lecture

We considered abductive reasoning, its importance as part of the intelligence of a rational agent, and how we might incorporate this kind of reasoning in the logic programming/agent model of a rational agent. We also briefly considered that for certain problems it is more difficult to find their solutions than it is to verify them. We did this, as we will see in a later lecture, because the basic idea plays a role in Kowalski's solution to the Selection Task.